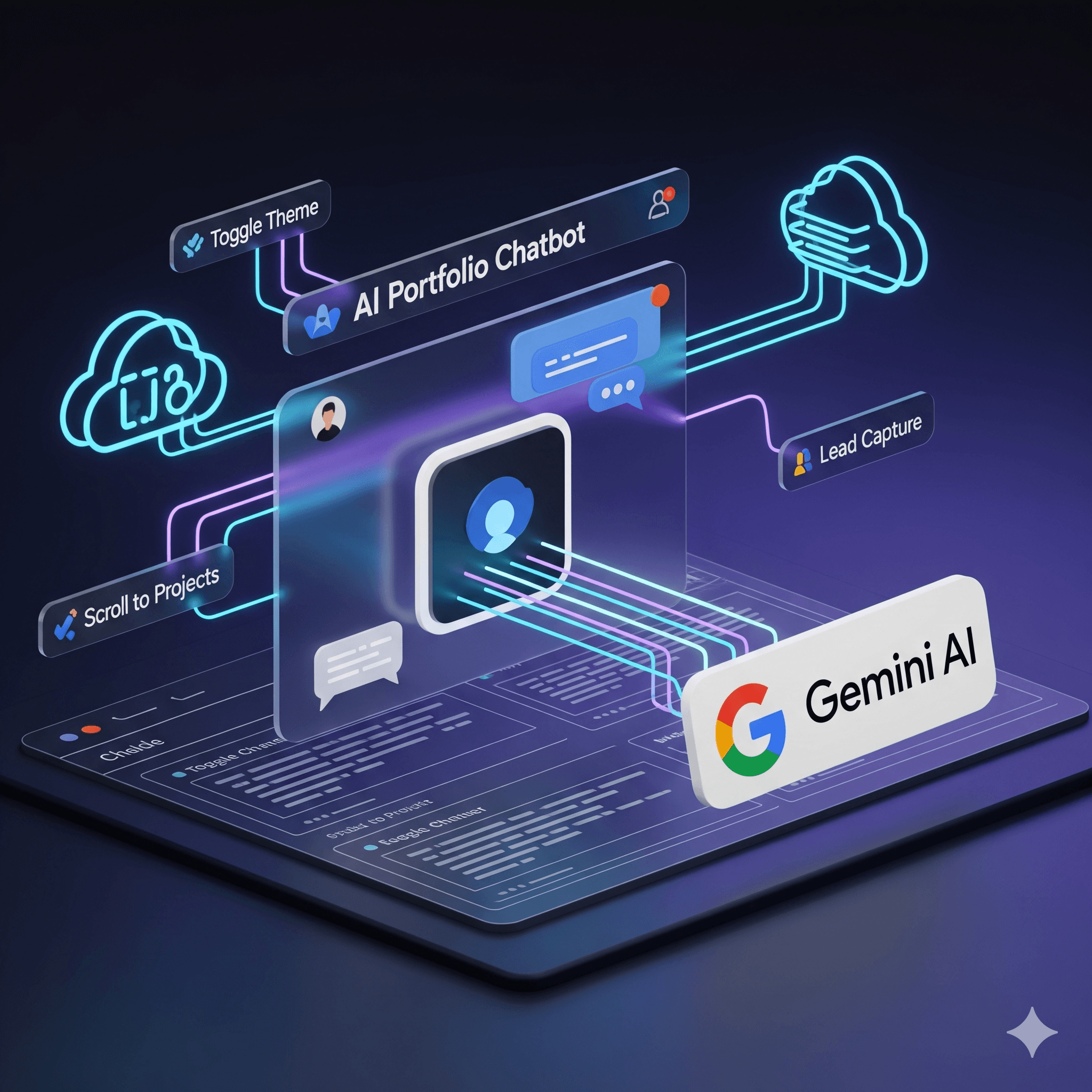

How to Build an AI Portfolio Chatbot with Tool Calling (Gemini + Netlify)

A portfolio that can answer questions, control the page, and capture leads—without exposing your API key—is within reach with a single LLM and a serverless function.

This post walks through how to add an AI chatbot with tool calling to your portfolio: we’ll use Google Gemini for the model, Netlify Functions to keep the API key server-side, and React on the front end. By the end, you’ll have a chatbot that can scroll to sections, toggle theme, filter projects, and collect leads via a webhook.

Why Add an AI Chatbot to Your Portfolio?

A static “Contact” form is fine. An AI agent that knows your skills, projects, and case studies is better.

Benefits:

• Differentiation — Few portfolios offer a conversational, context-aware assistant.

• Engagement — Visitors can ask “What’s his experience with n8n?” or “Show automation work” and get direct answers.

• Lead capture — When someone says “I want to hire you,” the agent can collect name, email, budget, and timeline in a natural flow and send them to your CRM or sheet.

• Learning — You get hands-on practice with LLM APIs, function calling, and serverless security.

The catch: you must keep your API key off the client and give the model tools so it can act (scroll, toggle theme, save lead) instead of only replying with text.

Architecture at a Glance

The setup has three parts:

- React app — Chat UI, message state, and tool execution (theme, scroll, filter, lead).

- Netlify serverless function — Receives chat requests (message + history + tools), calls Gemini with your API key, streams the response back.

- Gemini API — Model + system instruction + tool declarations; returns text and/or function calls.

Flow:

User types → Frontend sends message (and optional history) to /.netlify/functions/chat → Function calls Gemini with GEMINI_API_KEY from env → Stream of text and tool calls returns to Frontend → Frontend renders text and runs tools (e.g. scrollToSection('projects')).

Your API key never leaves Netlify’s server.
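The request in this flow can be pinned down with a small type. The sketch below is one possible shape, not the reference project's actual contract: the `ChatRequest` and `HistoryTurn` types and the `buildChatRequest` helper are hypothetical names, and the system instruction and tools are left out on the assumption that the function holds them server-side. Only the endpoint path and the message/history fields come from the flow above.

```typescript
// Hypothetical request contract for POST /.netlify/functions/chat.
type HistoryTurn = { role: "user" | "model"; text: string };

interface ChatRequest {
  message: string;
  history?: HistoryTurn[];
}

const CHAT_ENDPOINT = "/.netlify/functions/chat";

// Builds the arguments for fetch(). No API key is involved on the client.
function buildChatRequest(message: string, history: HistoryTurn[] = []) {
  const trimmed = message.trim();
  if (trimmed === "") throw new Error("message must not be empty");

  const body: ChatRequest = { message: trimmed };
  if (history.length > 0) body.history = history; // history is optional

  return {
    url: CHAT_ENDPOINT,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify(body),
    },
  };
}
```

In the browser this would be used as `const { url, init } = buildChatRequest("Show automation work"); const res = await fetch(url, init);`.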

Step 1: Keep the API Key Server-Side

Never put GEMINI_API_KEY in client-side code or in a public repo.

Option A – Chat runs entirely in the serverless function (recommended)

The frontend only calls POST /.netlify/functions/chat with { message, history, systemInstruction, tools }. The function creates the Gemini chat, sends the message, and returns the stream. The key stays in process.env.GEMINI_API_KEY on Netlify.
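The wire format of the returned stream is not specified here. One simple option, assumed purely for illustration, is newline-delimited JSON in which each line is either a text event or a tool-call event. The `StreamEvent` type and `createEventDecoder` helper below are hypothetical; the point of the sketch is that network chunks can split a line in half, so the client has to buffer the unfinished tail.

```typescript
// Assumed wire format: one JSON object per line (NDJSON).
type StreamEvent =
  | { type: "text"; text: string }
  | { type: "toolCall"; name: string; args: Record<string, unknown> };

// Returns a push() function that accepts raw string chunks and yields
// only the events whose lines have fully arrived.
function createEventDecoder() {
  let buffer = "";
  return function push(chunk: string): StreamEvent[] {
    buffer += chunk;
    const lines = buffer.split("\n");
    buffer = lines.pop() ?? ""; // the last piece is an incomplete line (or "")
    return lines
      .filter((line) => line.trim() !== "")
      .map((line) => JSON.parse(line) as StreamEvent);
  };
}
```

Text events can then be appended to the current message and tool-call events handed to whatever runs the tools.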

Option B – Key delivery for client-side chat

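If you want the chat session to live in the browser (e.g. using @google/genai in React), the browser needs a credential of its own, which means a separate endpoint that hands out the key or proxies requests. A simple approach is a small function that reads GEMINI_API_KEY and returns it only to requests from your origin, checked via the Origin header or a secret header. Be aware of the trade-off: any key returned to the browser is visible in the network tab, and non-browser clients can send whatever Origin they like, so this discourages casual abuse but does not keep the key secret. Prefer doing the heavy lifting (Gemini calls) in the serverless function so the key is used only on the server.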

In the reference project, the frontend fetches the key from /.netlify/functions/env and then creates the chat in the client. For maximum security, moving the chat into the Netlify function (Option A) is the cleanest: the client only sends messages and receives streamed responses.
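Whichever option you choose, an origin allowlist is a cheap first filter on any function the browser calls. The sketch below uses a hypothetical `ALLOWED_ORIGINS` list and `isAllowedOrigin` helper (the example origins are placeholders). Treat it as defence in depth only, since a script or curl can forge the Origin header.

```typescript
// Hypothetical allowlist; replace with your own site origins.
const ALLOWED_ORIGINS = ["https://example.com", "http://localhost:5173"];

// Returns true only when the Origin header exactly matches an allowed origin.
function isAllowedOrigin(originHeader: string | null | undefined): boolean {
  if (!originHeader) return false; // missing header: reject
  const origin = originHeader.trim().toLowerCase();
  return ALLOWED_ORIGINS.indexOf(origin) !== -1;
}
```

A function would typically return a 403 response when this check fails, before doing any other work.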

Step 2: Define Tools (Function Declarations)

Gemini supports function calling: you declare tools with names, descriptions, and parameters; the model can return a tool call instead of (or in addition to) text. Your frontend then runs the corresponding logic.

Example tools for a portfolio:

| Tool | Purpose |
|---|---|
| scrollToSection | Scroll the page to home, skills, projects, etc. |
| toggleTheme | Switch between light and dark mode. |
| filterProjects | Filter the project list by category. |
| saveLead | Send name, email, interest, budget, timeline to a webhook (e.g. Google Sheets, n8n). |

In code, each tool is a function declaration with a name, description, and a JSON schema for parameters. For example, in TypeScript with @google/genai:

    import { Type } from "@google/genai";

    // Declares one tool the model may call; the frontend implements the behavior.
    const scrollToSectionTool = {
      name: "scrollToSection",
      description: "Scrolls the page to a specific section.",
      parameters: {
        type: Type.OBJECT,
        properties: {
          sectionId: {
            type: Type.STRING,
            description: "Section ID: 'home' | 'skills' | 'projects' | 'experience' | 'contact'"
          }
        },
        required: ["sectionId"]
      }
    };

You pass an array of these into the chat config as tools: [{ functionDeclarations: [scrollToSectionTool, toggleThemeTool, filterProjectsTool, saveLeadTool] }]. The model will use them when it decides the user wants to navigate, change theme, filter, or submit a lead.
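The other three declarations follow the same pattern. The sketch below is an assumption about how they might look, not the reference project's code: the descriptions are illustrative, and `Type` is a local stand-in for the enum exported by @google/genai so the block runs on its own.

```typescript
// Local stand-in for the Type enum that @google/genai exports.
// In a real project: import { Type } from "@google/genai".
const Type = { OBJECT: "OBJECT", STRING: "STRING" } as const;

const toggleThemeTool = {
  name: "toggleTheme",
  description: "Switches the site between light and dark mode.",
  // No parameters: the call itself is the whole instruction.
};

const filterProjectsTool = {
  name: "filterProjects",
  description: "Filters the project list by category.",
  parameters: {
    type: Type.OBJECT,
    properties: {
      category: { type: Type.STRING, description: "Category to show." },
    },
    required: ["category"],
  },
};

// Small helper so the five lead fields stay uniform.
const leadField = (description: string) => ({ type: Type.STRING, description });

const saveLeadTool = {
  name: "saveLead",
  description: "Saves a lead once the visitor has given all five details.",
  parameters: {
    type: Type.OBJECT,
    properties: {
      name: leadField("Visitor's full name."),
      email: leadField("Contact email address."),
      interest: leadField("What the visitor wants help with."),
      budget: leadField("Approximate budget."),
      timeline: leadField("Desired timeline."),
    },
    required: ["name", "email", "interest", "budget", "timeline"],
  },
};

// Add scrollToSectionTool to this list in the real config.
const tools = [
  { functionDeclarations: [toggleThemeTool, filterProjectsTool, saveLeadTool] },
];
```

Marking all five lead fields as required in the schema backs up the rule that the model should not call saveLead early, although the system instruction still needs to say so explicitly.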

Step 3: System Instruction: Context and Behavior

The system instruction is where you teach the bot who you are and how to behave.

Include:

• Role — e.g. “You are [Name]’s portfolio assistant.”

• Context — Concise summaries of skills, experience, projects, and case studies (or links to them).

• Capabilities — “You can scroll to sections, toggle theme, filter projects, and save leads.”

• Lead capture rules — When the user shows intent (e.g. “I want to hire you,” “Get in touch”), collect name, email, interest, budget, and timeline one by one; only call saveLead when all five are present.

• Tone — e.g. friendly, professional, concise.

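Keep the instruction short. It is sent with every request, so it counts against the model's context window and adds to cost and latency on each turn. You can build it dynamically from your existing data (e.g. map over SKILL_CATEGORIES, PROJECTS, CASE_STUDIES) so one source of truth drives both the site and the bot.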
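Building the instruction from site data might look like the sketch below. The `SkillCategory` and `Project` shapes and the `buildSystemInstruction` helper are hypothetical; adapt them to whatever your SKILL_CATEGORIES and PROJECTS constants actually contain.

```typescript
// Hypothetical data shapes; adapt to the structures your site already uses.
interface SkillCategory { title: string; skills: string[] }
interface Project { title: string; category: string; summary: string }

// Assembles role, context, capabilities, and lead rules into one string.
function buildSystemInstruction(
  ownerName: string,
  skillCategories: SkillCategory[],
  projects: Project[],
): string {
  const skills = skillCategories
    .map((c) => `- ${c.title}: ${c.skills.join(", ")}`)
    .join("\n");
  const work = projects
    .map((p) => `- ${p.title} [${p.category}]: ${p.summary}`)
    .join("\n");

  return [
    `You are ${ownerName}'s portfolio assistant. Be friendly, professional, and concise.`,
    `Skills:\n${skills}`,
    `Projects:\n${work}`,
    "You can scroll to sections, toggle theme, filter projects, and save leads.",
    "When a visitor wants to hire or get in touch, ask for name, email, interest, " +
      "budget, and timeline one at a time. Call saveLead only when all five are present.",
  ].join("\n\n");
}
```

Because the text is generated, adding a project to the site updates the bot's knowledge on the next deploy with no separate prompt to maintain.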

Step 4: Handle Streaming and Tool Calls in the Frontend

When you call chat.sendMessageStream({ message }), you get an async iterator. Each chunk can contain:

• Text — append to the current assistant message.

• Function calls — e.g. { name: "scrollToSection", args: { sectionId: "projects" } }.

Pseudocode:

    // `result` is the stream returned by chat.sendMessageStream({ message })
    for await (const chunk of result) {
      // Text arrives incrementally; append it to the current assistant message
      if (chunk.text) appendToMessage(chunk.text);

      // A chunk may also carry one or more complete function calls
      for (const call of chunk.functionCalls ?? []) {
        switch (call.name) {
          case 'scrollToSection': scrollToSection(call.args.sectionId); break;
          case 'toggleTheme': toggleTheme(); break;
          case 'filterProjects': setProjectFilter(call.args.category); break;
          case 'saveLead': await sendToWebhook(call.args); break;
        }
      }
    }

You can show a short “Done!” message for UI actions (theme, scroll, filter) and a confirmation for lead capture. If you use streaming, render text as it arrives and run tools as soon as a full call is available.
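As the tool list grows, a handler registry can replace the chain of name checks and produce the confirmation text in one place. The sketch below is an assumption about how that could be organised: `ToolCall`, `ToolHandler`, and `runToolCall` are hypothetical names, and the confirmation strings are placeholders.

```typescript
type ToolCall = { name?: string; args?: Record<string, unknown> };
type ToolHandler = (args: Record<string, unknown>) => void | Promise<void>;

// Runs one tool call and returns a short status line for the chat UI.
// A Map is used so that names like "toString" cannot hit inherited properties.
async function runToolCall(
  call: ToolCall,
  handlers: Map<string, ToolHandler>,
): Promise<string> {
  const handler = call.name ? handlers.get(call.name) : undefined;
  if (!handler) return `Unknown tool: ${call.name ?? "(unnamed)"}`;
  try {
    await handler(call.args ?? {});
    return call.name === "saveLead" ? "Thanks, your details were sent." : "Done!";
  } catch {
    // A failing webhook or DOM lookup should not break the chat.
    return "Sorry, that action failed.";
  }
}
```

The streaming loop would then call `runToolCall(call, handlers)` for each function call and append the returned string as a short assistant message.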

Step 5: Lead Capture via Webhook

The saveLead tool should send data to your backend, not hardcode anything in the client. A simple pattern:

• Webhook URL — Stored in env (e.g. VITE_WEBHOOK_URL) or returned from a small serverless function.

• Payload — Name, email, interest, budget, timeline, and optionally a short conversation summary.

• Receiver — Google Apps Script (Google Sheets), n8n, Make, or any HTTP endpoint that appends a row or creates a CRM contact.

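Your frontend (or a small Netlify function) does fetch(WEBHOOK_URL, { method: 'POST', body: JSON.stringify(lead) }). That keeps the chatbot decoupled from your CRM and lets you switch providers without changing the bot logic. Note that Vite inlines VITE_-prefixed variables into the client bundle, so a webhook URL stored that way is visible to anyone who inspects the site; if the endpoint must stay private, post the lead to a small Netlify function and forward it from there.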
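The model can still call saveLead with a field missing, so it is worth checking the arguments before anything is posted. The following is a minimal sketch under that assumption; `missingLeadFields` and `buildLeadRequest` are hypothetical helpers, and the optional conversation summary is left out.

```typescript
const LEAD_FIELDS = ["name", "email", "interest", "budget", "timeline"] as const;
type LeadField = (typeof LEAD_FIELDS)[number];
type Lead = Record<LeadField, string>;

// Returns the fields that are absent, non-string, or blank.
function missingLeadFields(args: Record<string, unknown>): LeadField[] {
  return LEAD_FIELDS.filter((field) => {
    const value = args[field];
    return typeof value !== "string" || value.trim() === "";
  });
}

// Builds the fetch() options for the webhook, or throws if the lead is incomplete.
function buildLeadRequest(args: Record<string, unknown>) {
  const missing = missingLeadFields(args);
  if (missing.length > 0) {
    throw new Error(`Missing lead fields: ${missing.join(", ")}`);
  }
  const lead = {} as Lead;
  for (const field of LEAD_FIELDS) lead[field] = String(args[field]).trim();
  // Mirrors the fetch call in the text; add headers if your receiver needs them.
  return { method: "POST", body: JSON.stringify(lead) };
}
```

When the check fails, the list of missing fields can be fed back into the conversation so the bot asks for them instead of saving a partial lead.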

Step 6: Netlify Function for Chat

A minimal Netlify function that proxies to Gemini could look like this:

• Method — POST only.

• Body — { message, history?, systemInstruction?, tools? }.

• Env — GEMINI_API_KEY set in Netlify dashboard.

• Logic — Instantiate Gemini with the key, create a chat with the given system instruction and tools, send the message (and history if provided), then stream the response back (or collect chunks and return once).

If the frontend owns the conversation state, you can still use a function that accepts a single message plus the history and returns the stream, so the key stays server-side. The only difference from a server-held chat is where the “session” (history) is stored (client vs server).
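The checks such a function performs before it spends a Gemini call can be sketched as a pure function. This covers only the validation part of the logic listed above, and the actual Gemini call and streaming are omitted. `ChatBody`, `Validation`, and `validateChatRequest` are hypothetical names, and the status codes are one reasonable choice rather than a requirement.

```typescript
interface ChatBody {
  message: string;
  history?: unknown[];
  systemInstruction?: string;
  tools?: unknown[];
}

type Validation =
  | { ok: true; body: ChatBody }
  | { ok: false; status: number; error: string };

// Validates method, configuration, and body shape, in that order.
function validateChatRequest(
  method: string,
  rawBody: string | null,
  apiKey: string | undefined,
): Validation {
  if (method.toUpperCase() !== "POST") {
    return { ok: false, status: 405, error: "Method not allowed" };
  }
  if (!apiKey) {
    return { ok: false, status: 500, error: "GEMINI_API_KEY is not configured" };
  }
  let parsed: unknown;
  try {
    parsed = JSON.parse(rawBody ?? "");
  } catch {
    return { ok: false, status: 400, error: "Body must be valid JSON" };
  }
  if (typeof parsed !== "object" || parsed === null) {
    return { ok: false, status: 400, error: "Body must be a JSON object" };
  }
  const body = parsed as Partial<ChatBody>;
  if (typeof body.message !== "string" || body.message.trim() === "") {
    return { ok: false, status: 400, error: "message is required" };
  }
  if (body.history !== undefined && !Array.isArray(body.history)) {
    return { ok: false, status: 400, error: "history must be an array" };
  }
  return { ok: true, body: body as ChatBody };
}
```

In a Netlify function using the Lambda-compatible handler, this might be called as `validateChatRequest(event.httpMethod, event.body, process.env.GEMINI_API_KEY)`, returning the error status early when `ok` is false.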

Takeaways

• API key — Never in the client. Use a serverless function (e.g. Netlify) to call Gemini.

• Tools — Define clear function declarations (name, description, parameters) so the model can scroll, toggle theme, filter, and save leads.

• System instruction — One place for role, context (skills/projects/case studies), and lead-capture rules.

• Streaming — Handle both text chunks and function calls in the same loop; execute tools and optionally show short confirmations.

• Leads — Send to a webhook (Sheets, n8n, Make) so you can change the backend without touching the chatbot.

Building this flow gives you a portfolio that feels alive, captures intent cleanly, and teaches you how to combine an LLM with real-world actions—all with a small, maintainable stack (React, Gemini, Netlify).

Author Note

This article is based on a production portfolio that uses React, TypeScript, Vite, Google Gemini, and Netlify. The same pattern (serverless + tool calling + webhook) applies to other LLMs and hosting providers.